In the process of creating a new Cities of Things manifesto for 2026 and beyond, an explorative research project was started as a reflection on the state of Cities of Things. Through interviews with 30 experts, the first outlines of thinking about the shift from a city of things with agency towards living in a reality of immersive AI were given more depth.

The State of Cities of Things

Living with things that are part of the city fabric, as residents, as citizens. That is what we envisioned back in 2017, resulting in a set of dilemmas that still hold true in current relations with AI. But what has changed in almost a decade? Machine learning and AI became generative AI. And we are evolving into a society where we increasingly partner with other intelligences. This is very much ‘in your face’ when using gen AI tools for creating, and even for communicating, as a coach or a shrink. Trust in the systems has changed. The critique is also serious. Big tech has shifted its attention to generative AI, and new big tech players such as OpenAI and Anthropic have taken a defining role next to existing ones like Google and Meta. Even for a company that seems to lag behind, like Apple, it is the core of the attention.

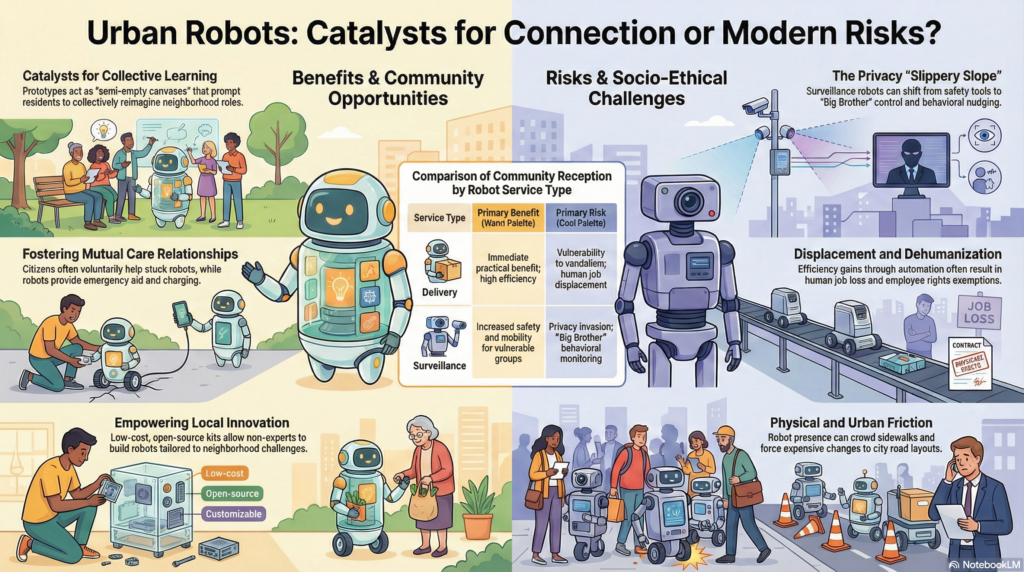

This all focuses on the backend, the infrastructure, and the screen-based interactions, with extensions to other forms of communicating through images and sound. The physical angle is still early in development. Physical AI is becoming a thing in 2026 for sure, and robotics is accelerating with humanoids and doganoids. It is still fresh and experimental.

You could say that these forms of physical AI are the fully embodied ones. Another form is the autonomous vehicle, which gets more and more agency to make decisions by itself and to operate with fewer instructions. The latest version of FSD (Full Self-Driving) is illustrative. Comparing videos over the years gives you trust that the current version is capable of dealing with all regular situations and only has flaws in edge cases. You might even say that it still has human characteristics; it sometimes misses a sign or is confused. How forgiving humans are of such mistakes is, however, quite different.

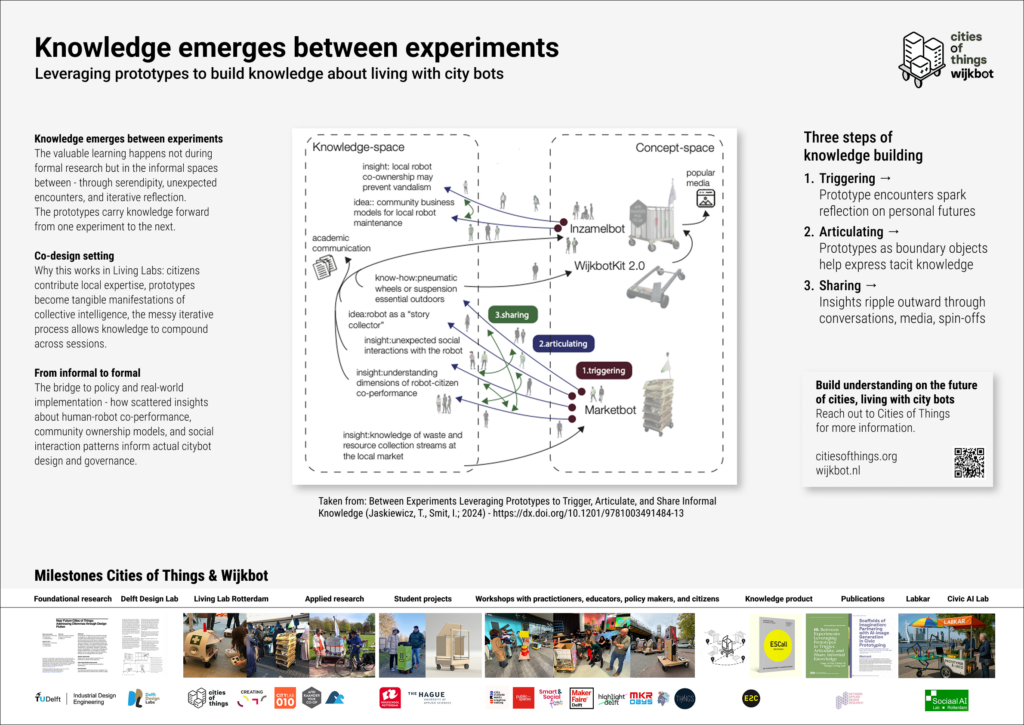

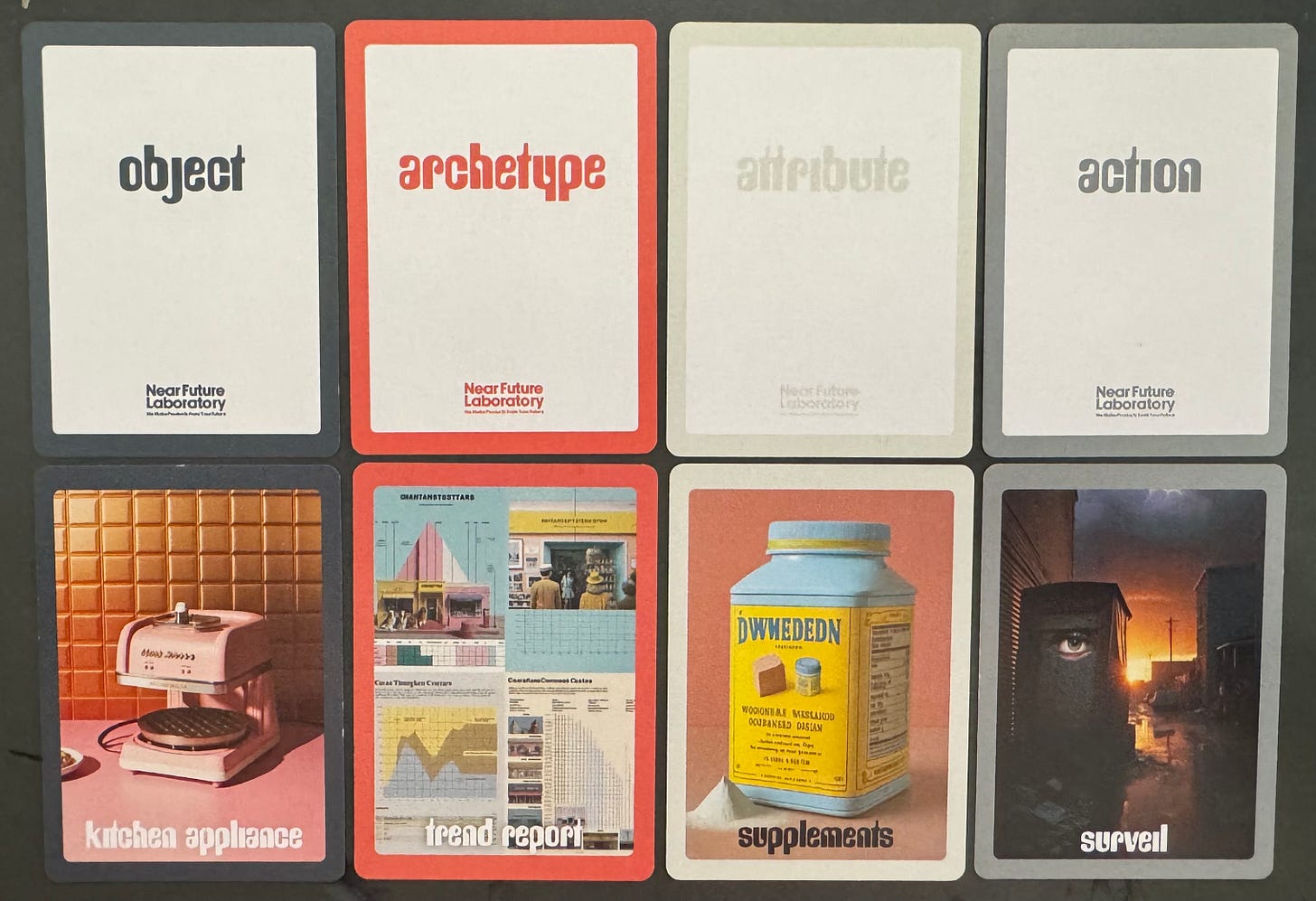

From the beginning of the Cities of Things research, we have thought that things are not the same as objects. We like to see things more as assemblies of meaning and behavior. With that, a thing can also be a static thing or a system as a whole. In the thirty interviews that serve as the point of departure for reflecting on the state of Cities of Things, this notion is common. And it goes beyond that, looking at the other intelligences and more-than-human actors and systems. New (or changing) forms of community and governance are a topic. And so are new types of infrastructure. One thing that we foresaw, but that was still very conceptual, is the fact that the things that form the city are an assembly of layers of states: the physical layer, the regulatory layer, the digital layer, the data layer, the object layer, the citizen layer, the relational layer. And within these layers, iterations happen and evolutions take place. The layers respond to each other in different ways. Or better said, they are entangled. How these entanglements are shaped differs and changes over time. A thing is, in that sense, a moment in time, entangling different aspects from the layers in a performative expression that takes up space in its environment. This is an abstract description, but the essence is that things are not static or given.

A story from a possible future

Before diving deeper, I would like to sketch a possible future of the state of Cities of Things that encapsulates some of the concepts that were discussed and that we find important to unpack later, using the expert opinions.

1st act, the context

Everything that can be a computer will become a computer. Chips are flocking into the things we use: in our houses, in our cars. The phones were maybe the first ones, and they are an enabling platform for those things that are still lagging behind. Things become sensors of their environment. Not for direct services, but at least for informing maintenance. The iPhone was introduced in 2007 as a marriage of a communicator, a music player, and an internet device. The word “communicator” is another word for “phone”; using it makes the narrative correct (a phone that is a phone sounds less interesting). That this device would literally become a communicator and connector of all other aspects of our lives, of our physical and digital realities, might not have been foreseen back then.

It is a known change in society, in human interactions and daily life. We are disconnected from our direct reality and live more prominently in our own chosen digital reality. We all carry our personal bubbles and live inside them. It is seen as a problem for our social intelligence, for our healthy human-to-human connections. Two capabilities can be separated here, though. One is the phone’s enabling capability for creating new infrastructure and new types of embedded intelligence in a network. That is a capability in its own right. The other capability is serving as the hub in our social fabric. Social on all levels of friendship: from deep commitment to loose one-time contact while doing a transaction, or even just an interaction.

The big shift that is happening now, in the second half of the 20s, is the extension of this enabling layer with AI and, more importantly, agentic capabilities. That is not a new function or system being added, but an evolution of existing intelligent systems. Or better, of all existing nodes in systems, aka things. What might happen next is that these agentic capabilities will also get more agency. Not forced by the producers or providers, but growing from the opportunities on a functional level. We will have different conversations with the things we use and the services we experience. Services that can be more autonomous.

The next possible step is the forming of new networks, new communities, and new societies. Among the things, but especially among gatherings of things and humans. And other non-human lifeforms.

The weak signals are the digital systems and especially the agent-based assistants that have their after-work gathering. It is a meme, but one that might make sense.

Next to the autonomous things, the level of agency is increasing. Who is taking the agency? Are we delegating more and more out of convenience and losing agency without being fully aware?

The next step after agency per thing is a type of organizing layer formed by the things and the communication between things, creating their own governance structures, with or without humans in the loop.

The term humans in the loop is already an indication of how this can evolve. A human in the lead or in the loop? Democratizing things? How far?

Some aspects that are interesting: What is driving this, and what are scenarios for this evolution? Who is the owner, and how does ownership relate to public spaces, governance, and democracies? New infrastructures? Organizing scales (family, building, neighborhood, etc.)?

2nd act, a story from the near future

In 2033, Apple is still organizing its yearly WWDC. For the 2033 edition, people are already getting excited by the first announcements in April. A major update to the immersive device is expected, after it launched last year. It seems Apple has found its mojo again after years of decline and doubts about its relevance in the current state of digital life.

The rise of a small player such as Fairphone in the late 20s was not to be expected. The pivot towards a community device, rather than a communication device, was so new that it shook the whole industry. The uptake of mesh-based communication ate into the business of all players, and although Apple was not that affected in the first years, it started to compete with the connector-hub-puck introduced in 2029, as people started to combine this with all kinds of services in a new open platform that emerged from the mesh networks.

It was not a surprise that Fairmesh was acquired by a big player, but Apple is usually not in the business of acquiring complete service brands. With the rapid growth of Fairmesh, it almost felt like a reverse takeover, and, officially, it is a collaboration, not a takeover, with Apple adding the Fairmesh protocol and chip to their AirTag devices.

More about Fairmesh

In 2029, the small mobile phone company Fairphone introduced a new device that was aimed at local communities. Already in 2025, the first local initiatives had begun experimenting with building mesh networks for communicating text-based messages. Part of the focus was on disaster-related communication needs, a hot topic as several wars were increasingly developing into a worldwide conflict. A pivotal moment was when mesh networks began connecting to edge devices, such as phones, enabling simple messages to be integrated into richer content through smart use of AI. With the latest open source models having a smaller footprint, it became common to download AI models to personal devices and build a local personal assistant.

The device that Fairphone introduced used a low-end computing architecture, and its core was the chip for peer-to-peer mesh networking. As they had also integrated this chip into their existing phones, they kickstarted a locally based network. And they offered a device for local communities, a kind of router/antenna that makes it possible for all kinds of communities to create local networks with messaging services.

The device gained traction as the liminal state of the world, moving from crisis to crisis, made people receptive to a new robust network that was not run by big tech. In just one year, millions of devices were distributed, and, more importantly, it has been possible since 2030 to connect from the Nordkapp to Tarifa and from rural Ireland to the Donbas, even reaching into Asia. And it is the first network to show similar uptake in the global south.

Apple

With all the economic crises that followed in 2026, the US-Iran conflict, and the already-started trend toward a declining market for premium phones, Apple had some rough years. In the early 2020s, they had been planning a new type of device that moved away from the phone as a form factor; the first signal was the iPhone Air, which proved that the core technical ingredients fit into a small footprint: the camera bump. After the bust of the Apple Vision devices, the company can capitalize on its new success, and with the new AirTag, it launched a personal device that fully leverages the cloud services infrastructure. A special T1 chip was launched to create a platform for physical AI, a form of AI that emerged in the late 20s. With the Tag, services can be connected to every device you like. One thing that is not possible yet is to connect to the internet without a phone nearby.

In 2032, Apple decided to start a collaboration with Fairmesh, not by fully acquiring it but by opening up its AirTag with a mesh chip and adding an AirTag Plus with voice-based communication. Fairmesh is also expected to be added to a new version of the Apple Watch.

About

These are rough thoughts about the state of Cities of Things, as part of making sense of the current state and as part of the process of formulating a new manifesto and design principles for Cities of Things, which is aimed to be completed in May 2026. They were written between 10 and 17 April and used as a resource for the first version, which is discussed at the event on 24 April.

More to follow!